In the era of AI and machine learning, traditional databases struggle with a fundamental challenge: understanding similarity and semantic meaning. Vector databases have emerged as the solution, powering everything from recommendation systems to RAG (Retrieval Augmented Generation) applications.

🔍 The Problem: Finding Similar Items

Imagine you're building an e-commerce platform. A user searches for "comfortable running shoes for marathons". Traditional keyword-based search would only find exact matches for these specific words. It would completely miss:

- ❌"Long-distance athletic footwear with cushioning"

- ❌"Marathon trainers with ergonomic support"

- ❌"Endurance running sneakers"

These products are semantically similar but use different words. Traditional databases don't understand meaning – they only match exact text.

💡 The Core Challenge

How do we find items that are conceptually similar even when they use completely different words? How do we measure "closeness" in meaning, not just in text?

🕰️ Before Vector Databases: The Old Approaches

Before vector databases, developers relied on several approaches, each with significant limitations:

Keyword Search

Matching exact words or using stemming/lemmatization

Limitations:

- • Misses synonyms

- • No context understanding

- • Language-dependent

Boolean Filters

Complex AND/OR/NOT queries with metadata

Limitations:

- • Too rigid

- • Requires exact schema

- • Poor user experience

Full-Text Search

tf-idf, BM25 scoring algorithms

Limitations:

- • Still keyword-based

- • No semantic meaning

- • Ranking issues

⚠️ The Missing Piece

None of these approaches could truly understand semantic similarity – the ability to know that "happy" and "joyful" are related, or that a picture of a dog is similar to other dog pictures.

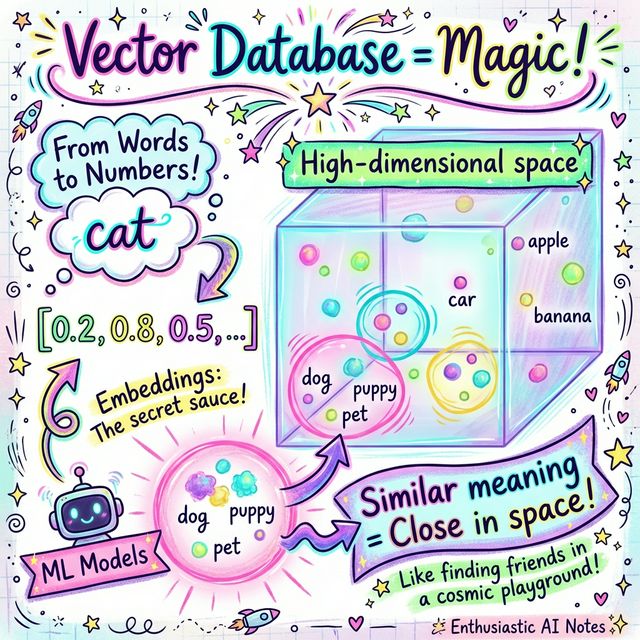

🚀 What is a Vector Database?

A vector database stores data as high-dimensional numerical vectors (arrays of numbers) and enables ultra-fast similarity search based on mathematical distance.

📐 Key Concept: Embeddings

Embeddings are numerical representations of data (text, images, audio) generated by machine learning models. Similar items have similar embeddings.

"kitten" → [0.19, 0.79, 0.51, -0.29, 0.09, ...] (similar!)

"car" → [-0.5, 0.1, -0.2, 0.7, -0.4, ...] (different)

🌌 High-Dimensional Space

Vectors typically have hundreds or thousands of dimensions (e.g., 768, 1536, 3072). In this space, similar concepts cluster together, and distance between vectors represents semantic similarity.

🔧 Popular Vector Databases

Pinecone

Fully managed

Weaviate

Open source

Chroma

Lightweight

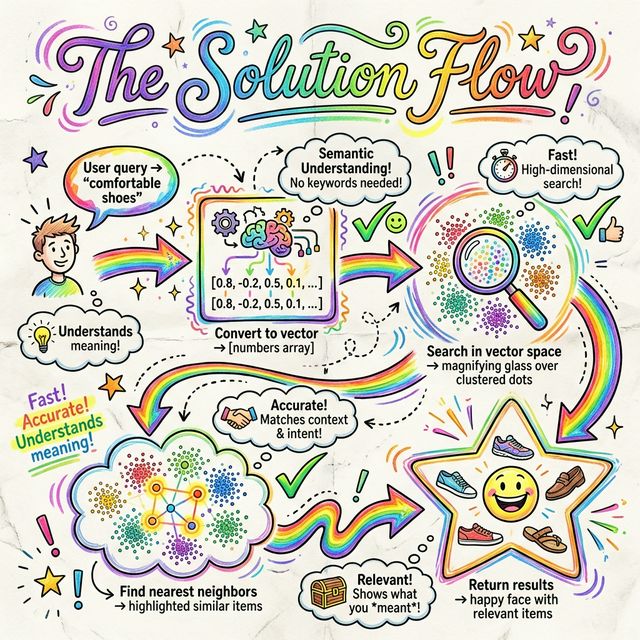

✅ How Vector Databases Solve the Problem

Convert Query to Vector

User's search query is converted to a vector using the same embedding model used for the data.

Similarity Search

The database performs a k-nearest neighbors (k-NN) search to find vectors closest to the query vector using distance metrics like cosine similarity or Euclidean distance.

Return Relevant Results

The most similar items are returned, ranked by distance. These results are semantically relevant even if they use different words!

🎯 The Magic

Vector databases can find "comfortable running shoes" even when products are described as "ergonomic marathon footwear" because the embeddings capture meaning, not just words!

💼 Applications & Trade-offs

✨ Real-World Applications

- 🛒

Recommendation Systems

Find products similar to user preferences

- 🔍

Semantic Search

Search by meaning, not keywords

- 🖼️

Image/Video Search

Find similar visual content

- 🤖

RAG Systems

Retrieval for AI chatbots and assistants

- 🚨

Anomaly Detection

Identify unusual patterns in data

⚠️ Challenges & Considerations

- 💰

Storage Costs

Vectors require more storage than text

- 🧮

Computational Complexity

k-NN search can be expensive at scale

- 🛠️

Index Maintenance

Requires periodic reindexing

- 📚

Learning Curve

Understanding embeddings and distance metrics

- 🎯

Model Selection

Choosing the right embedding model matters

💡 Best Practice

For most AI applications, the benefits of semantic understanding far outweigh the costs. Start small, measure performance, and scale as needed.

🎯 The Bottom Line

Vector databases are the essential infrastructure powering modern AI applications. They bridge the gap between human language/concepts and computer understanding, enabling truly intelligent search and recommendations.

Whether you're building a chatbot, recommendation engine, or semantic search system, vector databases are your foundation for success.

Want to learn more about AI and vector databases?

← Back to All Articles